15 August 2019

“Scotty, energise!” It’s with this legendary command that Captain Kirk accelerates the USS Enterprise up to the speed of light. Energy isn’t just going to play a role in the future but has also been a key driver behind a number of milestones in human history. In this brief history of energy, we relate how it all began and how the provision of energy has evolved over the centuries.

For thousands of years, if people wanted to light or heat their homes, the fuels of choice were peat, wood or charcoal, wax, oil, tallow or whale oil. At the dawn of history, people would either wander alone or in small groups through forest and bog to gather wood or cut peat. But the appearance of settlements, villages and towns led to a division of labour: town dwellers would now get their energy source from their particular trusted fuel seller. At the same time, it was always clear that energy supply was the responsibility of everyone. If you neglected to lay in stocks of fuel, you would have to sit in the dark and cold. It was only in the 19th century that this started to change.

It was on 28 January 1807 that new street lamps first bathed Pall Mall in London in a warm yellow light which shone brighter than kerosene, whale oil or rapeseed oil. The magic fuel behind this was town gas, which was directed into the lamps via pipes. This highly toxic gas mixture was generated by coal gasification, according to a method discovered by Irish clergyman and naturalist John Clayton way back in 1684. But it wasn't until about a century later, in 1792, that Scotsman William Murdoch first produced coal gas on a large scale, initially to light his own house, then to illuminate the entrance to the police headquarters in Manchester and later a factory site in Birmingham, all with the aid of gas lamps. The first gas company, the Chartered Company, was approved by the British Parliament in 1810. By 1819, there were already 486 kilometres of gas pipelines in London supplying more than 50,000 lamps. Energy supply was now centrally managed: the energy was produced on a large scale in one place and delivered via pipelines to where it was to be used. The job of lighting the gas lamps in the evening initially fell to lamplighters, but this process, too, was later automated.

© Hängelaterne Franz Kapaun, WStLA. Gas lights conquer the cities.

Progress at a hazardous price

Hanover was the first German city to build a gas works, in 1824. A year later, gas lamps would also light up Berlin’s prestigious boulevard, Unter den Linden, for the first time. By 1850, 26 German cities, including Stuttgart, Hamburg, Düsseldorf, Munich and Mannheim, were operating gas works. Their inhabitants celebrated this coal gas as a technological marvel. Not only did it brighten the streets, but it could also be used to heat ovens, cook food and operate engines. Gas lighting also soon made for more congenial brightness than candles or oil lamps in theatres and apartments. And yet, it came at a dangerous price: if things went wrong, the combination of gas and open flames proved to be highly explosive and a major cause of fires. Catastrophic accidents were commonplace. In 1881, more than 380 people were killed in the greatest catastrophe recorded to date, a fire in Vienna's Ringtheater.

Electrifying alternative

Around this time, however, a highly exciting alternative to gas lighting was successfully being put through its paces: As early as the 1840s, it had proved possible to supply arc lamps with electricity via magnetic-electric machines. For this purpose, a rotor was equipped with the most powerful magnets possible. Magnetic fields generated by rotational motion initiated a flow of electrons into the adjacent electrical conductors. In this way, the kinetic energy was converted into electrical energy. At this time, however, the procedure was well short of maturity. The permanent magnets required were expensive and heavy and not suitable for generating large currents.

© Public domain. In the early days of industrialisation, the steam engine was used to generate kinetic energy. This needed to be routed to the individual machines via transmission belts.

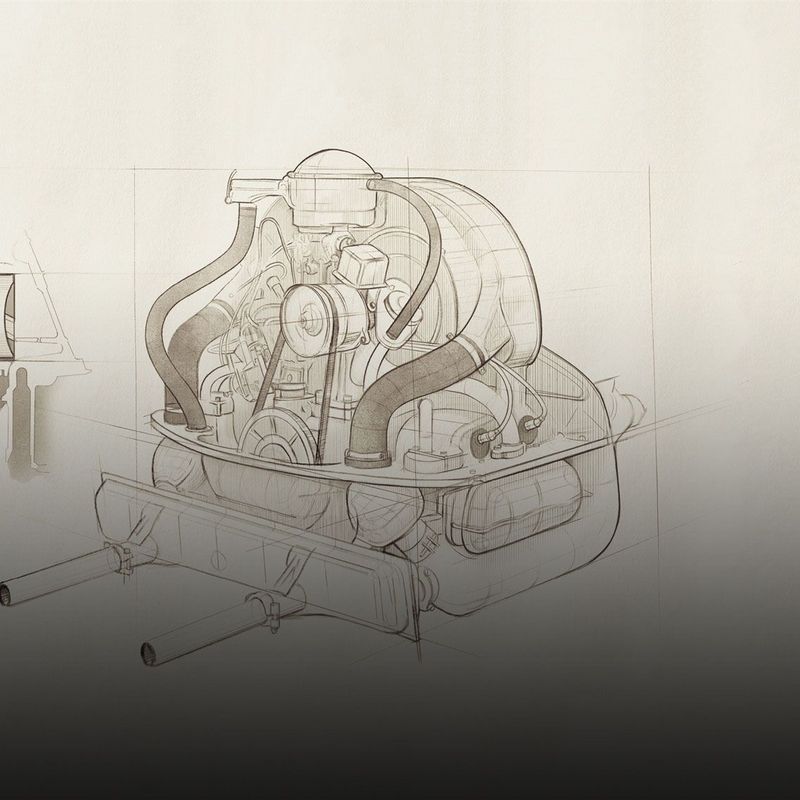

The breakthrough first came with the discovery of the dynamoelectric principle: In 1851, Slovak Ányos Jedlik succeeded in generating electricity with an electric machine without a permanent magnet, followed in 1854 by Søren Hjorth from Denmark, who was granted a patent for this invention in the same year. Completely independently of these pioneers, Werner von Siemens also identified the principle in 1866, but his unique contribution was to develop it for mass production in his machines – effectively jump-starting the electrical age. The difference was that his dynamo machines worked in both directions: As generators, they transformed the kinetic energy of steam engines, water wheels or water turbines into electricity; as electric motors, they converted electricity into kinetic energy to power machines in factories.

The Fairy-Tale King and the Dynamo

It was in 1878 that a steam engine first brought 24 Siemens generators to life in the machine room of Schloss Linderhof in Bavaria. The dynamos supplied carbon arc lamps with electricity, thus finally bathing the man-made Venus Grotto in the lustrous glow that fairy-tale king Ludwig II had always dreamed of. This plant is considered to be the first permanently installed power station in the world. Two years later, Thomas Alva Edison patented his improved incandescent lamp. In 1882, the first public power stations were built in New York and London. Germany’s first public electricity plant began operations in Berlin's Markgrafenstrasse in 1885. By the end of the year, it was supplying electricity to 5,000 lamps, for the most part in public buildings. Three years later, the capital's first electric streetlamps were switched on in Leipziger Strasse at the junction with Potsdamer Platz.

To begin with, electric light was considerably more expensive than town gas and gas lamps – and was accordingly a luxury reserved for grand boulevards and squares, office buildings and the living rooms of well-heeled citizens. But the benefits of electricity for industry were becoming increasingly apparent: it allowed generation and consumption, that is, power plants and users or generators and machines, to be separated more effectively than gas-powered motors could.

Progress with long-distance supply

Until the beginning of industrialisation, energy production was almost entirely local: If you wanted to saw wood or grind grain, you had to build your mill where the wind blew or the water flowed. With the invention of the steam engine, energy could then in principle be generated anywhere on earth. But pumps, saws and weaving machines remained connected to steam engines as if by an umbilical cord. After all, it was the steam engine which converted heat into kinetic energy – and this could not simply be sent down a pipe. It had to be transmitted mechanically to the individual machines in the production hall via transmission belts made of leather or steel. This was complex, prone to breakdown and inefficient, and resulted in many serious accidents. By combining electricity and an electric motor, machines could be uncoupled from the energy generators – or rather, the umbilical cord could be extended considerably with the addition of an electrical cable. This was a further advantage of electricity: it could be transported over long distances with very little loss.

In 1882, Werner von Siemens tested the ancestor of the modern trolley bus in Halensee near Berlin. In the same year, a long-distance electric power supply line was installed for the first time between Munich and the town of Miesbach, 57 kilometres away. The year 1891 saw the first transmission of high-voltage three-phase current between Frankfurt am Main and Lauffen near Heilbronn. The transmission loss over the length of the 176-kilometre overhead line was just 25 percent – a real sensation at the time. With the later introduction of higher voltages, it proved possible to reduce the loss further to just four percent.

Efficient range increases

With the implementation of three-phase current, the efficient range of power plants improved enormously. Electricity could now also be delivered remotely to large rural areas, and the power plants could now be built directly adjacent to sources of raw materials such as lignite mines, reservoirs and rivers. In 1895, the world's first large-scale power plant was connected to the grid in Niagara. Three years later, what was then the largest hydroelectric power station in Europe began operations in Rheinfelden on the Swiss border.

Coal-fired power stations, such as the Deptford Power Station in London, installed in 1891, were initially powered by steam engines. However, their efficiency was lamentable, at a mere ten percent. After all, the complicated mechanism involved meant that they had to convert the back-and-forth movement of pistons into rotary motion, which then drove the power generators. The invention and development of the steam turbine at the turn of the 20th century made it possible to harness the kinetic energy of steam more efficiently. Power plant efficiency leapt up to 30 percent, the classic steam engine was rendered obsolete, and coal-fired power plants became bigger and more efficient.

Electricity grids begin to merge

In the next few years, electrical plants would proliferate like mushrooms. By 1900, 652 power plants with a capacity of 230 megawatts were connected to the grid. Just six years later, their number had doubled, and the total output had tripled. By 1913, there were 4,040 electric plants with a total of 2,100 MW. In the 1920s, electricity also made its way into the more rural areas, courtesy of 3,372 power plants with an output of 5,683 MW and high-voltage power lines, which transported ever-higher-voltage electricity over ever greater distances. With the advent of the north-south power line between Pulheim in North Rhine-Westphalia and two hydropower plants in the Black Forest and Austria, the first interconnected network was created in 1924, linking previously isolated power grids to an overarching network with a total of 600 kilometres of power lines.

© TÜV NORD. Energy as far as the eye can see: A view of the construction site for the Brokdorf nuclear power plant.

Energy without end

During the economic miracle after the Second World War, not only did the appetite for lavish meals grow but also the hunger for electricity: fridges, washing machines, electric cookers, mixers, hair dryers and drying hoods were making their way into households and needed electricity to run. Increasingly large power plants were expected to meet the growing demand for energy. At the same time, a great deal of intensive work was going into completely new technologies for electricity generation.

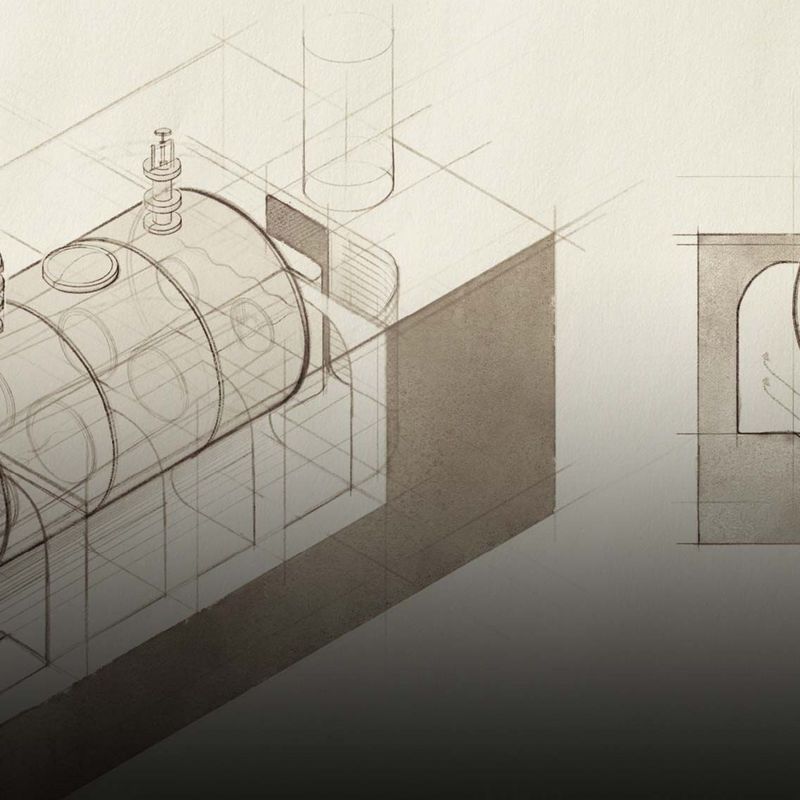

The most promising candidate seemed already to have been found: “The power of the atom could lead humanity to an unprecedented level of civilization and culture,” was the promise of a promotional film from the 1950s. The simple calculation for the scientists was this: one kilogramme of uranium contained as much energy as two million kilogrammes of coal. And uranium was available in nature in almost unlimited quantities and could even be extracted from seawater. Energy without end – the promise of an inexhaustible source of energy united all political and social camps in shared euphoria. Leading German nuclear scientists, however, warned against nuclear armament in the Göttingen Manifesto in 1957, at the same time pleading for “the peaceful use of nuclear energy to be promoted by all possible means.” In 1959, in its Godesberg programme, the SPD also expressed the hope that “in the atomic age, man will make his life easier, be liberated from worries and create prosperity for all, as long as he can use his daily growing power over the forces of nature only for peaceful purposes.”

Electricity from the atom

In the same year, the Adenauer government created the legal basis for the construction of nuclear test facilities in the Atomic Energy Act. The first commercial nuclear power plant in Obninsk, Russia, had already been in operation for five years. The first experimental reactors had also started up in Germany. As early as 1957, the state of Schleswig-Holstein commissioned what was then TÜV Hamburg to survey and inspect the Geesthacht 1 research reactor. A few years later, TÜV Hannover was charged with ensuring the safety of the research reactors of the “Physikalisch-Technischen Bundesanstalt” (“Federal Physical-Technical Institute”) in Braunschweig. From 1966, the experts were commissioned with the supervision of the construction of the Lingen demonstration power plant in Emsland.

© TÜV NORD. Testing functionality in the control centre of a power plant.

For the authorities, the experts from the Technical Monitoring Associations were the logical choice for this new task with the onerous responsibility it entailed. After all, they had been monitoring and ensuring the safety of potentially dangerous machines and technical equipment since the advent of the steam engine, nearly 100 years before. During that time, the engineers from the associations had been constantly engaged in familiarising themselves with new technologies and the possibilities of mitigating the associated risks: from automobiles to electricity and the machines and lifts it powered. Nor were the monitoring associations unprepared for the dawning of the atomic age. As early as 1956, they began to train experts in the basics of nuclear technology and radiological protection.

Nuclear safety a matter of teamwork

The special challenge of nuclear technology lay in the fact that technical guidelines to ensure the safety of the new power plants were in place in the USA but not in Germany. It was up to the experts to draft them. Whereas the testing of steam engines or electrical equipment focused on occupational health and safety, the high risk potential of nuclear energy required the protection of people in the vicinity of the plant from unplanned releases of radioactivity. To guarantee this special protection, the Atomic Energy Act required plants and their components to be assessed in line with the very latest advances in technology and science. What this meant in practice was that the assessment always had to take into account the latest findings from science and research. And the high risk potential meant that any approach involving learning from mistakes was out of the question. After all, the worst possible accident, total core meltdown, would be nothing short of a catastrophe.

© TÜV NORD. Delivery of the reactor pressure vessel to the Brokdorf nuclear power plant in 1984.

The experts were thus required to anticipate any possible problem. The crucial questions here were what might happen as a result of the failure of the system and what could cause such a failure. To expect a single expert from one field to find an exhaustive answer to these vital questions was unrealistic. This required a team of experts from a wide range of disciplines: electrical and mechanical engineers, construction supervision specialists, material testing engineers and experts in pressurised vessels and pipes that carry steam all worked alongside specialists in reactor physics and radiation protection technology. In the case of specialist opinions on nuclear matters, the tried-and-tested four-eyes principle for the monitoring of the individual inspection activities became the eight-eyes principle. Because not all the TÜV associations were familiar with all types of nuclear power plants, they founded the Institute for Nuclear Safety in 1965 to offer support in respect of certain technical questions.

Nuclear power to counter dependence

Until the 1970s, domestic coal and cheap oil were easy to come by in the Federal Republic. The shock came with the 1973 oil crisis. In order to reduce dependence on raw materials from abroad, efforts to promote nuclear power were considerably ramped up: in 1974, eleven nuclear power plants were in operation in Germany, eleven more were under construction, and six had been commissioned. TÜV Norddeutschland was commissioned by the nuclear regulators to monitor the planning, construction and operation of the Stade, Unterweser, Krümmel, Brunsbüttel and Brokdorf plants; TÜV Hannover assumed responsibility for the nuclear power plants at Würgassen, Grohnde and Emsland. Also subject to inspection were nuclear installations, for example for the production of fuel elements for nuclear plants and research facilities.

© TÜV NORD. All kinds of energy production revolve around the conversion principle: in nuclear plants, this focuses on the turbine.

Resistance grows

As the Cold War intensified at the end of the 1970s, the peace movement also stepped up its resistance to nuclear technology and the attendant dangers. On 28 March 1979, a serious incident occurred at the Three Mile Island nuclear power plant in Pennsylvania, which further intensified the debate in society about the consequences of nuclear technology. Tens of thousands of people demonstrated the following year against the planned nuclear waste disposal site in Gorleben. The nuclear disaster at Chernobyl on 26 April 1986 finally marked the decisive turning point. Italy was the first country to act and, after a referendum, shut down all four nuclear power stations in 1987. In Germany, four previously built stations had come on stream by 1989. However, no new nuclear power plants were planned. In 1990, North Rhine-Westphalia decided to completely phase out nuclear energy. The Fukushima meltdown in March 2011 heralded the end of nuclear power at the level of the federal German government: On 30 June 2011, the Bundestag decided to retire eight nuclear power plants and phase out nuclear energy by 2022. In that year, nuclear power generation in Germany will come to an end after 60 years.

Back to the wind

Alternatives to coal and nuclear power were already beginning to spring up on hills and on the coasts years before Chernobyl. In the wake of the oil crises of the 1970s, the state was looking for other ways to produce electricity without foreign raw materials. In the process, it hit upon one of the oldest forms of energy known to man: the wind. Scotsman James Blyth had shown as long ago as 1887 that it could be used for more purposes than just to drive sails or mills. The engineer used a wind-powered system to charge batteries to light his holiday home – albeit only at modest efficiency levels. Danish meteorologist and inventor Poul la Cour improved the aerodynamics of wind turbine architecture around 1900 and made attempts with his wind turbines to supply rural areas excluded from the electricity grid with their own electricity. Over 250 such installations were in operation in Denmark during the First World War. In the early 20th century, wind-powered motors were built for regional energy production in other places, too – only to disappear from the nation’s farms and villages with the advent of nationwide electrification in the 1920s. It wasn’t until 50 years later that wind energy gradually got going again, with research ongoing into a wide range of designs. The "Growian" on the North Sea near Brunsbüttel turned out to be a fatally flawed design in 1984. And yet, just three years later, on 24 August 1987, the first research wind farm in Germany was opened on the North Sea coast in Kaiser-Wilhelm-Koog. The performance and size of the wind turbines grew slowly at first before taking off. In 1988, a wind turbine would measure 70 metres in height and produce 80 kW. Twenty years later, 200-metre-high wind turbines would dwarf Cologne Cathedral and were 75 times more powerful than their predecessors. At the end of 2013, the power output of global wind power left nuclear energy in its wake for the first time.

© TÜV NORD. Type testing of the tower and foundation of a wind turbine by TÜV engineers on the coast of Schleswig-Holstein.

Remote monitoring

The experts from the TÜV have long been working to ensure that the turbines are able to cope with wind and weather, that the ground can carry their enormous weight and that the sound of their rotors does not represent an undue nuisance for local residents. The wind turbines are now regularly inspected by the experts, covering everything from tower construction through to the foundations, the rotor blades, the electrical systems and the mechanical engineering. Before construction, they assess whether a planned plant will be capable of delivering on its promises, and they do so not only in Germany or Europe but also, for example, in the US or China. This matters because the Middle Kingdom has been the world wind energy champion for years and, at 208 GW, is currently producing more electricity from wind than the US (103 GW) and Germany (62 GW), the two runners-up, combined. Additional wind farms with a total of 100 GW are scheduled to be added by 2025.

The experts have developed early warning systems to allow them to know at all times what the condition of a wind turbine is. Sound sensors measure the vibrations on wind turbines, and the data obtained can be used to predict an impending breakdown. This remote monitoring gives operators the opportunity to respond with countermeasures as early as possible. After all, when the wind blows, the rotors are supposed to rotate reliably – in this way turning kinetic energy into electrical energy and keeping the lights on and dinners warm in the oven without the user having to leave the house.